But as I was saying, people who do bypass it are being abusive and should be banned. The robots exclusion standard is a web standard, so most developers try to obey it even if they provide ways to bypass it. Personal websucking tools try to obey the rules too. I have one that I use on some sites (not Elysiun), and on occasion it will download just a robots.txt file and nothing else. There is a preference in the program to ignore the file. I checked on the HTTrack website and it has such an option too. Only disable robots.txt rules with great care.

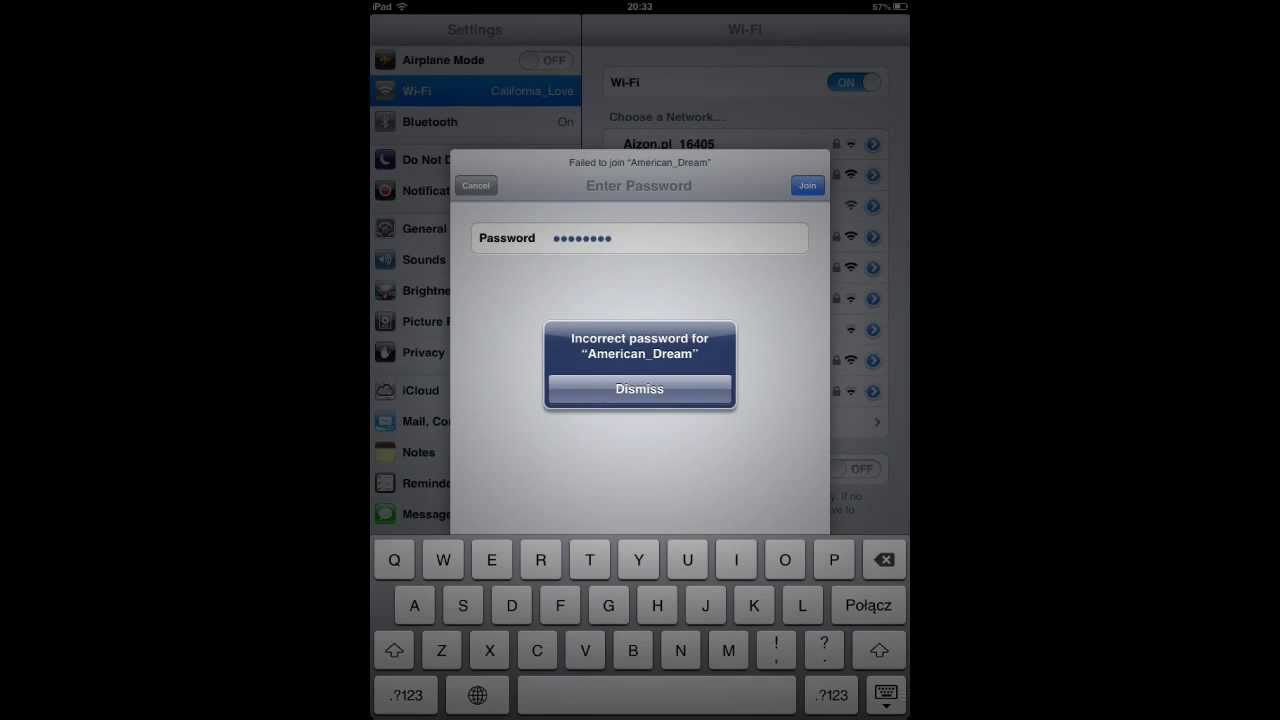

It is possible to specifically ban all websucking tools (that identify themselves as such), but that makes up quite a list and would actually add more overhead. The problem is not robots, since they tend to behave responsibly; the problem is people who use websucking tools.

User-agent: Mozilla/3.01 (hotwired-test/0.1)

Aye, robots.txt won’t help. You can even put a message in the file explaining to them why their download failed; you use a # for comments. The robots.txt should deter most bots, because a lot of them don’t have the option to ignore the robots.txt file. However, like I say, even if you do this, there are robots with the option to ignore the robots.txt file, but of course people who deliberately do so should have their IP banned.

There is a list of robots at Google, and you may want to add more than just Google. If you just want Google to index the site, you can do:

Disallow: put directories containing things like php scripts here

Basically, just disallow all bots with the first two lines and have rules for specific bots after it. This would have the names of bots that you would disallow.
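To make the "disallow everyone first, then add rules for specific bots" idea concrete, here is a minimal sketch that checks such a file with Python's standard `urllib.robotparser` before it goes live. The file contents are an assumption for illustration: the comment text, the `Googlebot` entry and the `/scripts/` directory are placeholders standing in for the real bot names and script directories, not the site's actual settings.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt. The message, the bot name and the /scripts/
# path are placeholders, not the site's real configuration.
ROBOTS_TXT = """\
# Automated downloads are not allowed here; that is why yours failed.
User-agent: *
Disallow: /

User-agent: Googlebot
Disallow: /scripts/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text locally, without fetching anything."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


if __name__ == "__main__":
    parser = build_parser(ROBOTS_TXT)
    checks = [
        ("Googlebot", "/forum/index.php"),
        ("Googlebot", "/scripts/search.php"),
        ("HTTrack", "/forum/index.php"),
    ]
    for agent, path in checks:
        # can_fetch() answers: may this agent request this path?
        print(agent, path, parser.can_fetch(agent, path))
```

With these placeholder rules the parser reports that Googlebot may fetch the forum page but not anything under `/scripts/`, and that any agent without its own entry falls back to the blanket `Disallow: /`. This only shows what a well-behaved bot would conclude; a tool set to ignore robots.txt never asks.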

The first step would be to place a file called robots.txt in the Elysiun root directory.

There are ways to help stop spiders but a lot of spiders have features to bypass them so they are not always useful.
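One such way, mentioned in this thread, is refusing tools that identify themselves as websuckers. A rough sketch of that check follows, assuming the server hands you the request's User-Agent header as a string. The function name `is_blocked` and the token list are hypothetical and only illustrative; a real list would be far longer, which is the overhead problem already noted.

```python
# Illustrative substrings only. These tokens are assumptions for the
# example, not a vetted or complete blocklist.
BLOCKED_TOKENS = ("httrack", "wget", "webcopier")


def is_blocked(user_agent: str, tokens=BLOCKED_TOKENS) -> bool:
    """Return True if the User-Agent header names a listed tool."""
    ua = user_agent.lower()  # match case-insensitively
    return any(token in ua for token in tokens)


if __name__ == "__main__":
    print(is_blocked("Mozilla/4.5 (compatible; HTTrack 3.0x; Windows 98)"))
    print(is_blocked("Mozilla/5.0 (X11; Linux x86_64) Firefox/115.0"))
```

This only catches tools that announce themselves honestly. Anything configured to send a browser-like User-Agent passes straight through, which is why these measures are not always useful.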
